Claude Code + AgentSEO: the fastest path from prompt to monitored workflow

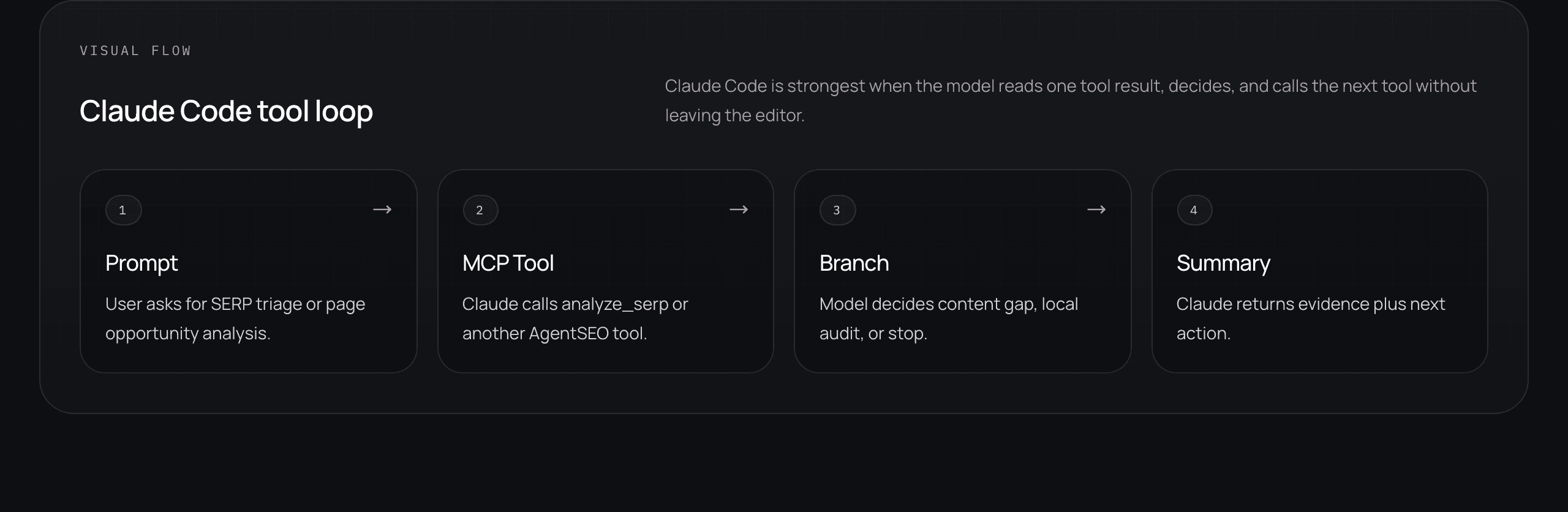

The real Claude Code opportunity for marketers is not one-off prompting. It is using a narrow tool loop to move from a question, to a grounded result, to a monitored workflow the team can keep running.

Written for hybrid builder-marketers and vibe marketers who want to turn Claude Code experiments into repeatable SEO workflows.

Claude Code / workflow design

Most teams stop too early with Claude Code. They prove the model can answer a question once, maybe even call a tool once, and then they treat that as the win. It is not. The real win is getting from one useful prompt to a loop the team can keep running and trust.

That is where AgentSEO matters. Claude Code can use AgentSEO over MCP to inspect a SERP, branch into the next tool call, and return a usable recommendation. From there, the team can decide whether the loop deserves to become a monitored workflow instead of another clever demo.

Start with one real question

The first step is not a giant agent. It is a concrete question that the team already asks often.

The best Claude Code workflows usually begin with an ordinary operator question. Is this query worth a comparison page? Did this page class lose visibility because of extractability or because of weak positioning? Which prompt set needs monitoring next? Questions like these are specific enough for a tool loop and useful enough to matter after the demo.

This is important because Claude Code becomes valuable when it shortens an existing decision loop. If the initial prompt is vague, the workflow usually stays vague too.

- Pick a repeated question from the real operating rhythm.

- Keep the scope narrow enough to inspect in one session.

- Define what a good output should help the team decide.

- Avoid starting with a full content-generation task.

Use AgentSEO for grounded branching

Claude Code gets much more useful when the model can read a real result and decide what tool should come next.

This is the core shift. Instead of asking Claude to reason from scraped pages and intuition, let it call AgentSEO over MCP. The model can inspect a SERP, decide whether the opportunity is editorial or local, branch into a content-gap analysis or another check, and return a compact summary with the next action.

That pattern is what makes the workflow feel operational. The model is not just generating text. It is making a bounded decision on top of structured evidence.

Related reading

How vibe marketers can use Claude Code for SEO workflows without breaking production

Use this first if the team still needs the safer operating boundary for Claude Code before trying to push it toward a repeatable loop.

MCP vs API: when REST still wins for SEO workflows

Use this when you need the architectural split between editor-native tool calling and backend execution to stay clear.

- Use MCP so Claude can call the tool directly.

- Make the branch condition explicit in the prompt.

- Keep the output compact and action-oriented.

- Prefer deterministic next steps over open-ended ideation.
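To keep the branch condition explicit rather than leaving it to the model's judgment, the decision can live in a few lines of ordinary code. The sketch below is a minimal Python illustration, assuming an earlier step (for example an AgentSEO SERP call plus a classification pass) has already labelled each top result with a page type. The labels, the 50% dominance threshold, the next-action strings and the `choose_next_step` name are all hypothetical and not part of AgentSEO.

```python
from collections import Counter

# Hypothetical page-type labels assumed to come from an earlier classification step.
PAGE_TYPES = ("comparison", "docs", "blog", "local")

# Deterministic mapping from dominant intent to the next check (illustrative wording).
NEXT_ACTIONS = {
    "comparison": "run a content-gap check against the comparison results",
    "docs": "audit the existing docs page for extractability",
    "blog": "draft an editorial brief",
    "local": "hand off to the local SEO workflow",
}


def choose_next_step(result_types: list[str], threshold: float = 0.5) -> dict:
    """Pick a dominant page type and next action from labelled SERP results.

    The branch condition is explicit: one page type must account for at least
    `threshold` of the recognised labels to count as dominant.
    """
    known = [t for t in result_types if t in PAGE_TYPES]
    if not known:
        return {"dominant": None, "share": 0.0,
                "next_action": "no usable labels: escalate to manual review"}

    page_type, count = Counter(known).most_common(1)[0]
    share = count / len(known)
    if share < threshold:
        # Mixed intent: route to a human instead of guessing.
        return {"dominant": None, "share": share,
                "next_action": "mixed intent: escalate to manual review"}

    return {"dominant": page_type, "share": share,
            "next_action": NEXT_ACTIONS[page_type]}
```

With five labelled results of which three are comparison pages, the function reports `comparison` as dominant with a 0.6 share. Mixed results fall through to manual review instead of forcing a recommendation, which keeps the next step deterministic.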

```text
Use AgentSEO to inspect the query "best seo api for ai agents".

1. Run the appropriate search-intelligence tool first.
2. Summarize whether the query is better served by a comparison page, docs page, or blog post.
3. If comparison intent is dominant, suggest the exact next workflow I should run.
4. Return:
   - one-sentence diagnosis
   - evidence from the result
   - recommended next action
   - what should stay manual
```

Capture the workflow shape before you automate it

The first repeatable win is usually a human-in-the-loop workflow that proves what should later be monitored.

Once Claude Code returns a result that actually helps, the team should not jump straight to full automation. First capture the workflow shape. What was the input? Which tool calls happened? Which branch condition mattered? Which part of the summary was genuinely useful? What downstream action followed? Answering those questions gives the team something real to evaluate.

This is also where the playground and docs matter. Before building a larger runner, confirm that the output shape, latency, and actionability hold up outside the one lucky prompt.
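A cheap way to test whether the output shape holds up is to check every response against the fields the prompt asked for. The following is a minimal Python sketch. It assumes the model returns JSON using the four keys from this article's example output (`diagnosis`, `evidence`, `recommended_next_action`, `keep_manual`). `check_output` and `timed_run` are hypothetical helpers, and the `run` callable stands in for however your setup invokes the loop.

```python
import json
import time
from typing import Callable

# Field names taken from the example output in this article; adjust them
# if your prompt asks for a different shape.
REQUIRED_FIELDS = {
    "diagnosis": str,
    "evidence": list,
    "recommended_next_action": str,
    "keep_manual": str,
}


def check_output(raw: str) -> list[str]:
    """Return a list of problems with one response (an empty list means usable)."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]

    problems = []
    for name, expected in REQUIRED_FIELDS.items():
        value = data.get(name)
        if not isinstance(value, expected):
            problems.append(f"{name}: missing or not a {expected.__name__}")
        elif not value:
            problems.append(f"{name}: empty")
    return problems


def timed_run(run: Callable[[], str]) -> tuple[str, float]:
    """Call a hypothetical `run` function that returns the raw response, and time it."""
    start = time.perf_counter()
    raw = run()
    return raw, time.perf_counter() - start
```

Running the same prompt a handful of times through `timed_run` and `check_output` gives a rough read on shape stability and latency before anyone builds a larger runner.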

Related reading

What should be measured in the playground before building a production workflow

Use this to validate the response shape and operational fit before the team promotes a Claude Code loop into something larger.

What to automate first if you want SEO leverage without content chaos

Use this to keep the first monitored workflow focused on signal and routing instead of overreaching into production too early.

- Document the exact loop that produced the useful result.

- Preserve the prompt, tool sequence, and branch condition.

- Check whether the output is reusable or was only lucky once.

- Treat repeatability as the gate into monitoring.
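Capturing the loop can be as simple as appending one structured record per run. The sketch below is an illustrative Python example built on stated assumptions. `LoopRecord` and its fields are hypothetical names that mirror the questions above (input, tool calls, branch condition, summary, downstream action). The JSON Lines file is just one convenient storage format.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class LoopRecord:
    """One captured run of a Claude Code loop (field names are illustrative)."""
    question: str                      # the recurring question being answered
    prompt: str                        # exact prompt text used
    tool_calls: list[str] = field(default_factory=list)  # tool names, in call order
    branch_condition: str = ""         # the condition that decided the branch
    summary: str = ""                  # the output the team found useful
    downstream_action: str = ""        # what the team actually did next
    captured_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Pretty-printed JSON for reviewing a single run."""
        return json.dumps(asdict(self), indent=2)


def append_record(path: str, record: LoopRecord) -> None:
    """Append one record per line (JSON Lines) so runs can be compared later."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(asdict(record)) + "\n")
```

The values stored in `tool_calls` should be whatever your MCP client actually logged. This sketch does not assume any specific AgentSEO tool names.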

```json
{
  "diagnosis": "Comparison intent is stronger than educational intent for this query family.",
  "evidence": [
    "SERP titles are comparison-led",
    "multiple results frame vendor tradeoffs directly",
    "docs pages are present but not leading"
  ],
  "recommended_next_action": "Draft a comparison-page brief and monitor citation presence weekly.",
  "keep_manual": "final positioning language and proof claims"
}
```

Move from session win to monitored loop

A workflow deserves monitoring when it answers a recurring question and the output can route a next action reliably.

The monitored version does not have to look like Claude Code itself. In many cases, Claude Code is where the team discovers the loop, and another system is where the loop runs repeatedly. The important part is that the workflow has graduated from a one-time prompt into something inspectable, reusable, and worth watching over time.

That is the fastest healthy path: prompt, tool call, branch, summary, repeated question, monitored workflow. Not every loop should become production, but the good ones deserve to be promoted instead of reinvented every week.
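One way to make "repeated question" concrete is a promotion gate that only passes once several runs of the same question agree on the next action. This Python sketch is hypothetical. The `ready_for_monitoring` name, the minimum of three runs and the 80% agreement threshold are illustrative defaults, not recommendations from AgentSEO.

```python
from collections import Counter


def ready_for_monitoring(next_actions: list[str], min_runs: int = 3,
                         min_agreement: float = 0.8) -> bool:
    """Hypothetical promotion gate: enough runs, and most of them agree.

    `next_actions` holds the recommended next action from each repeated run
    of the same question. Matching on lower-cased, trimmed strings is a
    deliberate simplification; comparing normalised action categories
    would be sturdier.
    """
    if len(next_actions) < min_runs:
        return False
    normalised = Counter(a.strip().lower() for a in next_actions)
    _, top_count = normalised.most_common(1)[0]
    return top_count / len(next_actions) >= min_agreement
```

Four matching recommendations out of five runs meet the 0.8 threshold. Three runs that each suggest something different do not.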

Where AgentSEO fits

AgentSEO fits as the structured search-intelligence layer that helps Claude Code graduate from an interesting prompt to a usable workflow.

AgentSEO gives Claude Code grounded SEO tools over MCP and gives the team a cleaner path from one prompt session into a repeated workflow. That is what makes the pairing interesting for marketers. Less guessing, better branching, and a clearer handoff into something the team can actually monitor.

That is the difference between a novelty and an operating advantage.


Daniel Martin

Founder, AgentSEO

Inc. 5000 Honoree and founder behind AgentSEO and Joy Technologies. Daniel has helped 600+ B2B companies grow through search and now writes about practical SEO infrastructure for AI agents, MCP workflows, and REST-first execution systems.

Continue this path

Builder-marketers using Claude Code

Start with the safest Claude Code workflow path, then move into monitored loops and the builder-marketer operating model.

Phase 3

How vibe marketers can use Claude Code for SEO workflows without breaking production

Claude Code can be a real marketing workflow surface if you use it for narrow tool-calling loops, not as a magic publishing machine. The safest starting point is research, triage, and workflow prototyping with AgentSEO over MCP.

Phase 3

What a builder-marketer workflow looks like with Claude Code, AgentSEO, and docs

The builder-marketer edge is not about acting like a full engineering team. It is about turning prompts, docs, tool calls, and small internal builds into a tighter organic growth system that actually ships.

FAQ

Questions teams usually ask next

What is the first sign that a Claude Code workflow is worth keeping?

It answers a recurring question with structured evidence and produces a next action the team would actually use again next week.

Does the monitored workflow need to keep running inside Claude Code?

Not necessarily. Claude Code is often the discovery surface. A later runner or monitored system may own the repeated execution.

Why use AgentSEO in this loop instead of generic web research?

Because grounded tool outputs give Claude Code something real to inspect and branch on instead of forcing the model to guess from scraped pages and loose context.

More in this topic

Claude Code and builder-marketer workflows


Claude Code

MCP for marketers: when Claude Code should call tools instead of generating another draft

The useful Claude Code question is not whether the model can write. It is whether the job needs grounded tool access. If the next step depends on a real SERP, a real page state, or a real workflow signal, tool-calling usually beats another draft.