How to measure AI visibility without lying to yourself

AI visibility is not one score. The practical job is to track mention rate, first mention, citations, and source mix across fixed prompt sets over time.

Audience: growth and engineering teams trying to measure answer-engine visibility

Topic: AI visibility / LLM mentions

A lot of teams are asking the same question right now: how do we measure AI visibility without inventing vanity metrics? The answer is simpler than the tooling market makes it sound. You do not need one magic score. You need a repeatable way to observe whether your brand appears, where it appears, and what sources the models trust.

The most useful Reddit threads and SEO discussions I reviewed this week all pointed in the same direction. One-off prompt checks are noise. What matters is repeated observation across a fixed prompt set, a clear competitor frame, and enough discipline to separate mention, citation, and outcome signals.

Why one-off AI checks fail

If you only run a prompt once, you are not measuring a trend. You are sampling randomness.

Answer engines do not behave like the search results a classic rank tracker measures. Model version changes, retrieval changes, prompt phrasing, and silent tuning all affect the output, and the same prompt can return a different answer on the next run. That is why a screenshot from one day is not a KPI. It is just an observation.

The fix is to stop treating AI visibility like a single ranking position. Build a small, stable prompt set. Run it on a schedule. Compare the results over time. That is the first point where you can tell the difference between noise and movement.

- Keep prompts fixed for at least 8 to 12 weeks before you judge the trend.

- Track each platform separately. ChatGPT, Perplexity, Gemini, and Google AI Mode do not behave the same way.

- Separate broad prompts from narrow buying prompts. Category visibility and decision visibility are different jobs.
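To put a rough number on "sampling randomness": a mention rate measured from a handful of runs carries a wide uncertainty band. The sketch below uses the Wilson score interval, a standard statistical choice for proportions. It is one reasonable option rather than a prescribed method, and the function name `wilson_interval` and the 95% level (`z = 1.96`) are assumptions for illustration.

```python
import math


def wilson_interval(mentions: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a mention rate (z = 1.96 is roughly 95%)."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    if not 0 <= mentions <= runs:
        raise ValueError("mentions must be between 0 and runs")
    p = mentions / runs
    denom = 1 + z**2 / runs
    centre = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    # Clamp to [0, 1] to absorb floating-point overshoot at the edges.
    return max(0.0, centre - half), min(1.0, centre + half)


# A single run where the brand was mentioned: the plausible range is huge,
# roughly 0.21 to 1.0.
print(wilson_interval(1, 1))

# 20 mentions across 40 repeated runs: roughly 0.35 to 0.65.
print(wilson_interval(20, 40))
```

The single-run interval spans most of the possible range, which is the statistical version of "a screenshot is not a KPI". Repeating the same prompt set narrows the band enough to see real movement.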

The KPIs that actually hold up

You need a small set of operator-friendly metrics, not a synthetic score that hides what changed.

The most useful KPIs are frequency-based and prompt-specific. Start with mention rate: how often your brand appears across repeated runs of the same prompt set. Then track first mention rate, because being listed first carries more weight than being buried in a list.

After that, track citations and source mix. If the model keeps citing your docs, your blog, Reddit, review sites, or competitor pages, that tells you where trust is accumulating. You can act on that. A blended 'AI readiness score' does not tell you what to fix next.

- Mention rate per prompt set.

- First mention rate for high-intent prompts.

- Citation rate and citation source distribution.

- Platform variance across ChatGPT, Gemini, Perplexity, and Google AI surfaces.

- Competitor displacement on prompts you care about most.
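As a minimal sketch, the first three KPIs can be computed directly from stored run records. The field names (`brand_mentioned`, `first_mentioned`, `cited_urls`) follow the run record shown later in this article. The helper names `domain` and `summarize`, the `example.com` URLs, and the sample outcomes are made up for illustration and are not real measurements.

```python
from collections import Counter
from urllib.parse import urlparse


def domain(url: str) -> str:
    """Return the host of a URL without a leading 'www.'."""
    return urlparse(url).netloc.lower().removeprefix("www.")


def summarize(runs: list[dict], owned_domain: str) -> dict:
    """Frequency-based KPIs over a list of run records."""
    total = len(runs)
    if total == 0:
        raise ValueError("need at least one run")
    mentions = sum(1 for r in runs if r["brand_mentioned"])
    firsts = sum(1 for r in runs if r["first_mentioned"])
    # A run counts as an owned citation if any cited URL is on our domain.
    owned = sum(
        1 for r in runs if any(domain(u) == owned_domain for u in r["cited_urls"])
    )
    sources = Counter(domain(u) for r in runs for u in r["cited_urls"])
    return {
        "mention_rate": mentions / total,
        "first_mention_rate": firsts / total,
        "owned_citation_rate": owned / total,
        "source_mix": dict(sources),
    }


# Hypothetical sample data: four runs of the same prompt.
SAMPLE_RUNS = [
    {"brand_mentioned": True, "first_mentioned": False, "cited_urls": [
        "https://www.agentseo.dev/blog/best-seo-api-for-ai-agents",
        "https://www.agentseo.dev/docs/api-reference"]},
    {"brand_mentioned": True, "first_mentioned": True, "cited_urls": [
        "https://www.agentseo.dev/docs/api-reference",
        "https://reviews.example.com/seo-apis"]},
    {"brand_mentioned": False, "first_mentioned": False, "cited_urls": [
        "https://competitor.example.com/pricing"]},
    {"brand_mentioned": False, "first_mentioned": False, "cited_urls": []},
]

report = summarize(SAMPLE_RUNS, owned_domain="agentseo.dev")
print(report)
```

Keeping `source_mix` as raw counts per domain, rather than folding it into a score, preserves the detail that tells you what to fix next.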

| Prompt family | Example prompt | Why it belongs in the set |
|---|---|---|
| Category | best seo api for ai agents | Shows whether you are present in broad market framing. |
| Comparison | AgentSEO vs DataForSEO | Shows whether buying-intent prompts still trust your owned assets. |
| Problem aware | how to measure ai visibility | Shows whether educational prompts create mention or citation entry points. |
| Workflow | how to build an seo agent | Shows whether operator-intent queries trust your product plus content system. |
| Brand + use case | AgentSEO Claude Code workflow | Shows whether hybrid builder-marketer queries map back to your docs and blog. |
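One way to keep a prompt set fixed is to store it as immutable data and derive the weekly run plan from it. The sketch below encodes the five example prompts from the table. The `Prompt` class, the hash-based `prompt_id`, the platform slugs, and `run_plan` are all assumptions made for illustration, not part of any particular tool.

```python
import hashlib
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Prompt:
    family: str
    text: str

    @property
    def prompt_id(self) -> str:
        # ID derived from the exact text: editing a prompt yields a new ID,
        # so a reworded prompt cannot silently continue an old trend line.
        return hashlib.sha256(self.text.encode("utf-8")).hexdigest()[:12]


PROMPT_SET = (
    Prompt("category", "best seo api for ai agents"),
    Prompt("comparison", "AgentSEO vs DataForSEO"),
    Prompt("problem_aware", "how to measure ai visibility"),
    Prompt("workflow", "how to build an seo agent"),
    Prompt("brand_use_case", "AgentSEO Claude Code workflow"),
)

# Hypothetical platform slugs; each platform is tracked as its own series.
PLATFORMS = ("chatgpt", "perplexity", "gemini", "google_ai_mode")


def run_plan(prompts, platforms):
    """Every prompt on every platform, as (prompt_id, family, platform, text)."""
    return [
        (p.prompt_id, p.family, platform, p.text)
        for p, platform in product(prompts, platforms)
    ]


plan = run_plan(PROMPT_SET, PLATFORMS)
print(len(plan), "checks per scheduled run")
```

Deriving the ID from the prompt text is a deliberate trade-off: it makes accidental edits visible at the cost of starting a new series whenever wording changes.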

What to ignore for now

The market is full of blended scores that sound precise but hide the real operating question.

I would ignore any metric that compresses everything into one number without showing the underlying prompts, platforms, and sources. That kind of number is useful for pitch decks and almost useless for actual work.

I would also be careful with AI visibility tools that mostly relabel old SEO metrics. Backlinks, crawl health, and rankings still matter, but they are not the same thing as whether a model names you in an answer today.

- Do not report one blended AI score to the executive team and pretend it explains causality.

- Do not mix classic search rankings and AI mentions into one trend line.

- Do not compare broad prompts and buying prompts as if they are equal-intent queries.
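In practice, keeping these signals apart mostly means grouping before averaging. The sketch below computes a mention rate per platform and prompt family instead of one blended number. The `family` field, the function name, and the sample outcomes are assumptions for illustration.

```python
from collections import defaultdict


def mention_rate_by_segment(runs: list[dict]) -> dict[tuple[str, str], float]:
    """Mention rate per (platform, prompt family), never blended across them."""
    counts = defaultdict(lambda: [0, 0])  # segment -> [mentions, total runs]
    for r in runs:
        segment = (r["platform"], r["family"])
        counts[segment][0] += int(r["brand_mentioned"])
        counts[segment][1] += 1
    return {segment: m / n for segment, (m, n) in counts.items()}


# Hypothetical outcomes: (platform, family, brand_mentioned)
SAMPLE = [
    ("chatgpt", "category", True),
    ("chatgpt", "category", False),
    ("chatgpt", "comparison", True),
    ("perplexity", "category", False),
    ("perplexity", "comparison", True),
    ("perplexity", "comparison", True),
]
runs = [
    {"platform": p, "family": f, "brand_mentioned": m} for p, f, m in SAMPLE
]

rates = mention_rate_by_segment(runs)
for segment, rate in sorted(rates.items()):
    print(segment, rate)
```

In this made-up sample the blended rate would be 4 of 6, which hides that category prompts on one platform never mention the brand at all.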

Build a weekly measurement loop instead

A small operating loop beats a giant dashboard that nobody trusts.

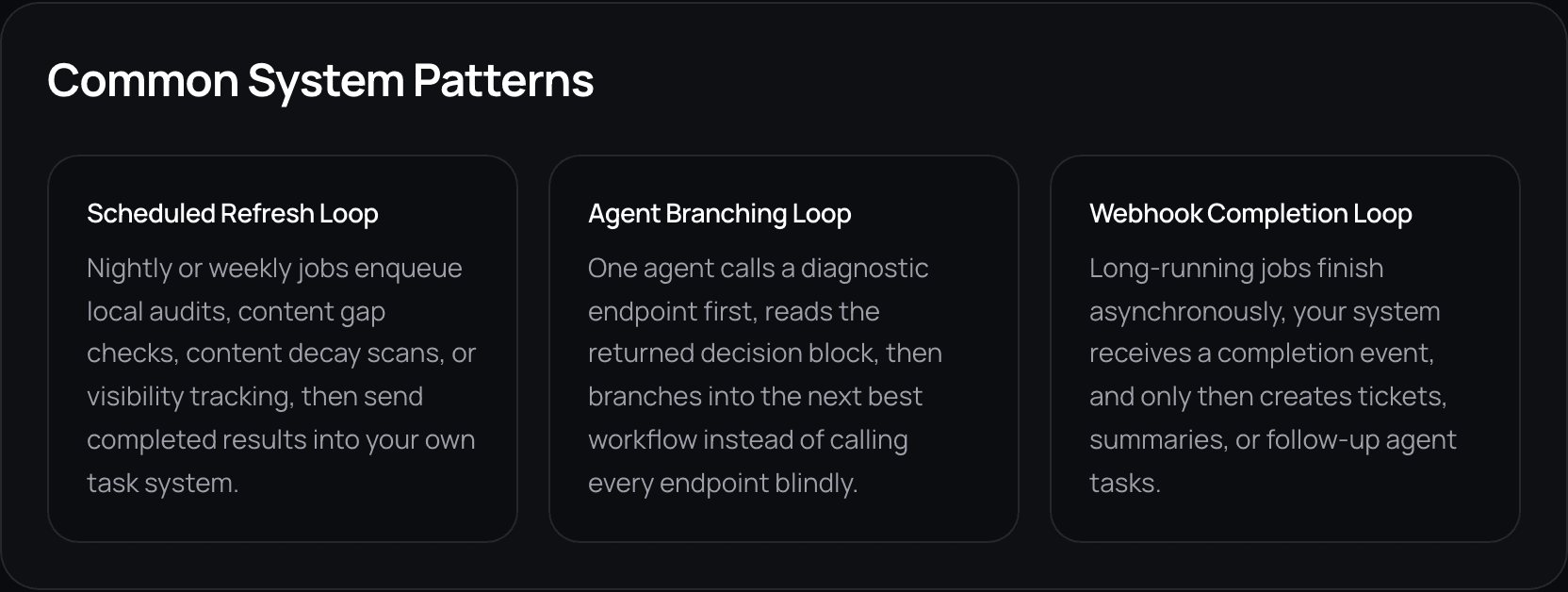

Pick 20 to 40 prompts that represent your category, your comparison set, and your buying moments. Run them weekly across the platforms that matter to you. Store the answer, the mention outcome, and the cited sources. Then review changes, not isolated events.

This is where a lot of teams get clarity fast. Weak mention rate with strong rankings usually points to representation or source-trust gaps. Strong mentions with weak rankings can mean the models associate you with a narrow category, but that association has not yet translated into durable web visibility.
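"Review changes, not isolated events" can be sketched as a week-over-week comparison per prompt and platform. The record fields (`prompt`, `platform`, `brand_mentioned`, `run_date`) match the stored run record in this article. The function names, the second run date, and the sample outcomes are assumptions for illustration.

```python
from collections import defaultdict
from datetime import date


def weekly_rates(runs: list[dict]) -> dict:
    """Mention rate per (prompt, platform) series and ISO week."""
    counts = defaultdict(lambda: defaultdict(lambda: [0, 0]))
    for r in runs:
        iso = date.fromisoformat(r["run_date"]).isocalendar()
        week = (iso[0], iso[1])  # (ISO year, ISO week number)
        cell = counts[(r["prompt"], r["platform"])][week]
        cell[0] += int(r["brand_mentioned"])
        cell[1] += 1
    return {
        series: {week: m / n for week, (m, n) in weeks.items()}
        for series, weeks in counts.items()
    }


def week_over_week(runs: list[dict]) -> dict:
    """Change in mention rate between the two most recent weeks of each series."""
    changes = {}
    for series, by_week in weekly_rates(runs).items():
        weeks = sorted(by_week)
        if len(weeks) >= 2:  # a single week is an observation, not a trend
            changes[series] = by_week[weeks[-1]] - by_week[weeks[-2]]
    return changes


def record(run_date: str, mentioned: bool) -> dict:
    return {
        "prompt": "best seo api for ai agents",
        "platform": "perplexity",
        "brand_mentioned": mentioned,
        "run_date": run_date,
    }


# Hypothetical data: two repeated runs in each of two consecutive weeks.
SAMPLE = [
    record("2026-05-01", True),
    record("2026-05-01", False),
    record("2026-05-08", True),
    record("2026-05-08", True),
]

changes = week_over_week(SAMPLE)
print(changes)
```

A series with only one week of data is skipped on purpose, since there is nothing to compare it against yet.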

    {
      "prompt": "best seo api for ai agents",
      "platform": "perplexity",
      "brand_mentioned": true,
      "first_mentioned": false,
      "cited_urls": [
        "https://www.agentseo.dev/blog/best-seo-api-for-ai-agents",
        "https://www.agentseo.dev/docs/api-reference"
      ],
      "competitors_present": ["DataForSEO", "Semrush"],
      "run_date": "2026-05-01"
    }

Where AgentSEO fits in the measurement stack

The goal is to make AI visibility observable enough to act on, not mystical enough to debate forever.

AgentSEO fits best when you want to operationalize these checks as repeatable workflows instead of ad hoc research. The product can help you store runs, compare changes, and connect visibility checks back to the pages, entities, and workflows you control.

That is the real leverage here. Not a prettier score. A better operating loop.

Daniel Martin

Founder, AgentSEO

Inc. 5000 Honoree and founder behind AgentSEO and Joy Technologies. Daniel has helped 600+ B2B companies grow through search and now writes about practical SEO infrastructure for AI agents, MCP workflows, and REST-first execution systems.

FAQ

Questions teams usually ask next

Can I measure AI visibility with one score?

You can create one, but it will hide the useful detail. Mention rate, first mention, citation source mix, and platform differences are more actionable than a blended index.

How often should I run AI visibility checks?

Weekly is a good default for most teams. It is frequent enough to spot movement and stable enough to reduce overreaction to one-off answer changes.

What matters more, mentions or citations?

Both matter, but they answer different questions. Mentions tell you whether you entered the answer. Citations tell you which assets and surfaces the model trusted enough to reference.
