Why you rank in Google but are still not cited in AI search

Ranking and citation are related, but they are not the same retrieval job. If your pages rank but never get named in AI answers, the usual gap is extractability, proof, or positioning clarity.

For SEO, product, and content teams trying to translate rankings into citations

A page can rank well and still disappear inside AI answers. I keep seeing teams assume good Google positions should automatically turn into ChatGPT, Perplexity, or AI Mode visibility. That is not how the retrieval layer works anymore.

Google's current guidance is still grounded in classic SEO fundamentals, but the practical challenge has changed. The page now has to be both discoverable and easy for a model to extract, trust, and attribute.

Rankings and citations are different retrieval jobs

Classic rankings measure one kind of visibility. AI citations depend on whether the system can use your page as evidence.

Search rankings tell you whether a page is competitive in a search result set. AI citations tell you whether a model decided your page was useful enough to help construct an answer. Those are connected, but they are not identical.

This is why teams get confused. They improve rankings, see impressions go up, and still do not get named in AI answers for the same topic. The missing step is usually not another round of generic SEO. It is making the page easier to quote, easier to validate, and clearer about what it should be cited for.

Where the gap usually comes from

The most common failure points are clarity, extractability, and corroboration.

Current Google documentation says there is no special AI markup or extra AI file required to appear in AI features. The practical advice is still to make important content available in text, keep internal links strong, and make structured data match visible content. That is the baseline.
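The "structured data matches visible content" part of that baseline can be spot-checked with a small script. What follows is a minimal sketch under stated assumptions, not a Google tool: `unmatched_fields` is a hypothetical helper, the three fields it checks are an arbitrary choice, and it only looks at top-level JSON-LD properties using an exact, case-insensitive substring match.

```python
import json
from html.parser import HTMLParser


class PageParser(HTMLParser):
    """Collects JSON-LD blocks and text outside script/style tags."""

    def __init__(self):
        super().__init__()
        self.jsonld_blocks = []  # raw JSON-LD strings
        self.visible_text = []   # text outside script/style (includes <title>)
        self._in_jsonld = False
        self._skip_depth = 0     # > 0 while inside script or style

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1
            if tag == "script" and dict(attrs).get("type") == "application/ld+json":
                self._in_jsonld = True
                self.jsonld_blocks.append("")

    def handle_endtag(self, tag):
        if tag in ("script", "style"):
            self._skip_depth = max(0, self._skip_depth - 1)
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.jsonld_blocks[-1] += data
        elif self._skip_depth == 0:
            self.visible_text.append(data)


def unmatched_fields(html, fields=("name", "headline", "description")):
    """Return (field, value) pairs from JSON-LD that do not appear in the page text.

    Hypothetical helper for illustration: nested objects and @graph arrays
    are not traversed, and matching is a plain substring test.
    """
    parser = PageParser()
    parser.feed(html)
    parser.close()
    text = " ".join(" ".join(parser.visible_text).split()).lower()

    missing = []
    for raw in parser.jsonld_blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # malformed JSON-LD is a separate problem; skip it here
        items = data if isinstance(data, list) else [data]
        for item in items:
            if not isinstance(item, dict):
                continue
            for field in fields:
                value = item.get(field)
                if isinstance(value, str) and " ".join(value.split()).lower() not in text:
                    missing.append((field, value))
    return missing
```

An empty result only means the checked fields appear somewhere in the page text. It says nothing about whether the page is eligible for, or will be used in, any AI feature.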

The harder part is what practitioners keep surfacing in community discussions. Many teams are technically crawlable already. They still fail because the page is fuzzy about category fit, weak on proof, or written in a way that forces the model to infer the key point instead of lifting it cleanly.

- The page does not state clearly what the company does, who it helps, and why it is credible.

- The answer is buried under long intros and vague framing instead of appearing early in plain language.

- Evidence exists, but it is scattered across the page instead of being tied to the claim.

- Off-site mentions, comparisons, and third-party validation are too weak to reinforce the on-page story.

Run a simple diagnosis ladder before you rewrite everything

Separate ranking, sourcing, naming, and conversion into distinct checks.

I would not jump straight into content rewrites. First check whether the page ranks, whether it gets surfaced as a source at all, whether your brand is explicitly named, and whether people convert after the visit. Those are four different questions.

Once you split the problem that way, the fixes become much more obvious. If you are ranking but not sourced, authority and evidence are the likely problem. If you are sourced but not cited by name, the page is often not quotable enough. If you are cited but nothing happens after the click, the issue moves into messaging and conversion.

- Check classic rankings and impressions first so you know whether the page is discoverable.

- Check whether AI answers use your page as a source, even when they do not name you.

- Check whether the brand is explicitly cited in the answer output.

- Check whether the visit leads to downstream engagement, not just a vanity screenshot.
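The four checks above can be written down as an ordered ladder that stops at the first failing step. This is a minimal sketch, assuming each check has already been answered by hand as a yes or no; `PageCheck`, `LADDER`, and `first_gap` are hypothetical names, and the focus areas simply restate the guidance in this section.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class PageCheck:
    """Manual yes/no answers for one page and one topic (hypothetical structure)."""
    ranks: bool     # competitive in classic search results
    sourced: bool   # used as a source in AI answers, even if unnamed
    named: bool     # brand explicitly cited in the answer output
    converts: bool  # the visit leads to downstream engagement


# Ordered from the earliest step to the latest; the focus text paraphrases the article.
LADDER = [
    ("ranks", "discoverability: the page is not yet competitive in classic search"),
    ("sourced", "authority and evidence"),
    ("named", "quotability: make the page easier to quote and attribute"),
    ("converts", "messaging and conversion"),
]


def first_gap(check: PageCheck) -> Optional[Tuple[str, str]]:
    """Return (failed_step, likely_focus) for the first failing step, or None."""
    for step, focus in LADDER:
        if not getattr(check, step):
            return step, focus
    return None


# Example: the page ranks but is never used as a source.
print(first_gap(PageCheck(ranks=True, sourced=False, named=False, converts=False)))
```

Stopping at the first failure is a deliberate simplification: it keeps the team from rewriting copy for conversion when the page is not being sourced at all.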

What to fix first on the page

Tighten the answer, the proof, and the internal context before adding more volume.

The highest-leverage page edits are usually simple. Move the answer closer to the top. Use short declarative lines. Make the page explicit about the use case, the audience, and the tradeoff. Then add proof that can be cited without forcing the model to stitch it together.

I would also review the internal links around the page. Google's published guidance still calls out internal discoverability, and it matters here too. A page that sits alone is harder to treat as part of a coherent topic footprint.

- Answer the core question in the first screenful, not after a long setup.

- Use headings that carry meaning instead of vague marketing language.

- Put evidence near the claim: examples, screenshots, data, or precise tradeoffs.

- Strengthen internal links from adjacent posts, docs, and comparison pages.
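For the internal-link item, a quick way to see whether a page "sits alone" is to list which other pages link to it. The sketch below assumes you already have a crawl export mapping each page to the set of internal URLs it links to; `inbound_links`, the map format, and the example paths are all hypothetical.

```python
from typing import Dict, List, Set


def inbound_links(link_map: Dict[str, Set[str]], target: str) -> List[str]:
    """Return the pages, other than the target itself, that link to `target`."""
    return sorted(
        source
        for source, outgoing in link_map.items()
        if source != target and target in outgoing
    )


# Hypothetical crawl export: page -> internal URLs it links to.
link_map = {
    "/blog/ai-citations": {"/guide"},
    "/docs/setup": {"/pricing"},
    "/guide": {"/guide", "/blog/ai-citations"},  # self-links are ignored
}

print(inbound_links(link_map, "/guide"))    # pages that point at the guide
print(inbound_links(link_map, "/compare"))  # empty list: nothing links here
```

A short or empty list is a prompt to add links from adjacent posts, docs, and comparison pages, not a score in its own right.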

Where AgentSEO helps

AgentSEO fits best when you want to turn this into a repeatable workflow instead of occasional manual checking.

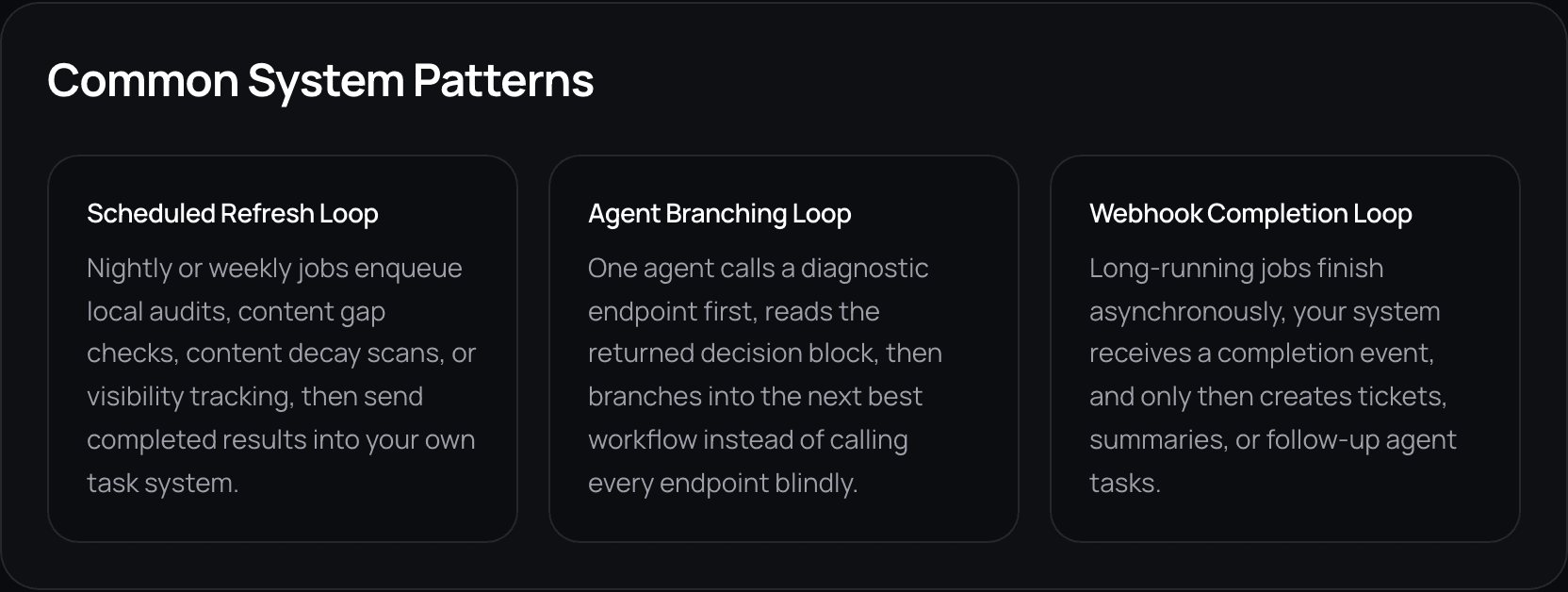

This is the part most teams underbuild. They take screenshots of AI answers, argue about whether the page appeared, and then lose the thread a week later. A real workflow should let you track the prompt set, the source set, and the follow-up action in one place.

That is where AgentSEO is useful. The goal is not just to prove visibility exists. It is to convert visibility checks into a system the team can rerun, compare, and act on.
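To make "rerun and compare" concrete, here is a generic sketch of what one tracked record and one comparison between runs could look like. It is not AgentSEO's data model or API: `PromptResult`, `sourcing_changes`, and the example domains are hypothetical, and collecting the sources for each prompt is assumed to happen elsewhere.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class PromptResult:
    """One prompt from the tracked set, as observed in a single run (hypothetical)."""
    prompt: str
    sources: List[str] = field(default_factory=list)  # domains the answer drew on
    follow_up: str = ""                                # action the team agreed on


def sourcing_changes(
    before: List[PromptResult], after: List[PromptResult], domain: str
) -> Dict[str, List[str]]:
    """List prompts where `domain` gained or lost source status between two runs."""
    was = {r.prompt: domain in r.sources for r in before}
    now = {r.prompt: domain in r.sources for r in after}
    gained = sorted(p for p in now if now[p] and not was.get(p, False))
    lost = sorted(p for p in was if was[p] and not now.get(p, False))
    return {"gained": gained, "lost": lost}


# Two runs of the same prompt set, one week apart (made-up data).
last_week = [
    PromptResult("best crm for startups", ["a.example"]),
    PromptResult("crm pricing comparison", ["site.example"], follow_up="add pricing table"),
]
this_week = [
    PromptResult("best crm for startups", ["a.example", "site.example"]),
    PromptResult("crm pricing comparison", ["b.example"]),
]

print(sourcing_changes(last_week, this_week, "site.example"))
```

Keeping the follow-up action on the same record as the prompt and its sources is the point: the comparison then shows both what changed and what the team had decided to do about it.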

Daniel Martin

Founder, AgentSEO

Inc. 5000 Honoree and founder behind AgentSEO and Joy Technologies. Daniel has helped 600+ B2B companies grow through search and now writes about practical SEO infrastructure for AI agents, MCP workflows, and REST-first execution systems.

FAQ

Questions teams usually ask next

Can a page be cited in AI search even if it does not rank first?

Yes. Strong rankings help, but citation depends on whether the page is useful as evidence for the answer. Pages with clearer definitions, stronger proof, or tighter relevance can still be cited even when they are not the top classic result.

Do I need special AI schema or an llms.txt file to get cited?

No, at least not for Google's AI features. Google's current guidance says there are no additional technical requirements, special schema, or extra AI files needed for AI Overviews or AI Mode beyond normal search eligibility and good SEO fundamentals.

What should I fix first if I rank but never get named?

Start with the page itself: tighten the answer, clarify the category fit, and put proof next to the claim. Then check whether the broader web reinforces the same story through mentions and comparisons.
