What an AI search reporting dashboard should and should not include

Most AI search dashboards become vanity systems fast. The useful version separates discoverability, sourcing, citation, and downstream business movement instead of collapsing them into one score.

For SEO, growth, and agency teams building a serious reporting layer for AI search.


The fastest way to make AI visibility work look fake is to put everything into one magic number. Teams do this because they want a simple dashboard, but the result is a metric that hides the real operating questions.

A useful dashboard should help a team decide what changed, where the gap lives, and what to fix next. That means separating discoverability, source usage, citation and mention share, and business impact instead of pretending they are one thing.

Separate the layers first

Discoverability, source usage, explicit citations, and pipeline movement are different jobs.

The reporting model should reflect the system. A page may rank well and still never be cited. A brand may be mentioned in an answer without being linked as a source. A cited page may drive no meaningful pipeline. Those are all different signals.

Once you split the dashboard into layers, the team stops arguing about whether the metric is right and starts focusing on where the actual operational problem sits.

- Discoverability: does the page rank or surface for the relevant query set?

- Source usage: does the answer engine appear to use the page as evidence?

- Explicit citation or mention share: is the brand named, linked, or repeatedly surfaced?

- Outcome movement: does the visibility lead to visits, assisted conversions, or pipeline-relevant action?
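One way to keep those four layers apart in the data itself is to store them as separate fields on each observation rather than as a single number. The sketch below is illustrative only: `LayerReport`, its field names, and the `gaps` helper are hypothetical, and it assumes the per-layer values have already been collected by whatever tracking you use.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class LayerReport:
    """One page's status for one monitored prompt (hypothetical schema)."""
    prompt: str
    page_url: str
    discoverable: bool        # page ranks or surfaces for the query set
    used_as_source: bool      # answer engine appears to use the page as evidence
    cited_or_mentioned: bool  # brand is named or linked in the answer
    outcome_events: int       # visits or assisted conversions attributed to it


# Layers are checked independently; no funnel order is assumed between them.
LAYERS = ("discoverable", "used_as_source", "cited_or_mentioned", "outcome_events")


def gaps(report: LayerReport) -> List[str]:
    """Return the name of every layer that is currently failing (False or 0)."""
    return [layer for layer in LAYERS if not getattr(report, layer)]


report = LayerReport(
    prompt="best crm for agencies",
    page_url="https://example.com/crm-comparison",
    discoverable=True,
    used_as_source=False,
    cited_or_mentioned=False,
    outcome_events=0,
)
print(gaps(report))
```

Because each layer is reported on its own, a page that ranks but is never used as a source shows up as a sourcing problem rather than as a slightly lower blended score.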

What the dashboard should show

The right dashboard helps the team decide what changed and what deserves a response.

A useful dashboard should surface the monitored prompt set, the answer source pattern, the competitor overlap, and the recent movement over time. It should also make it easy to jump from the metric into the asset that needs work.

That is the key distinction. The dashboard is not there to impress a client or executive with AI-colored charts. It is there to route attention toward the next page, brief, or positioning fix.


- Prompt groups tied to real buyer or operator intent.

- Page-level or topic-level source and citation movement.

- Competitor share around the same prompt set.

- A clear link from the metric to the page or workflow that needs action.
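Competitor share around a prompt group, as listed above, can be computed in more than one way. The sketch below takes one simple definition as an assumption: a domain's share is the fraction of prompts in the group where it is cited at least once. The observation format (`group`, `prompt`, `cited_domains`) and the function name are hypothetical.

```python
from collections import defaultdict


def citation_share(observations):
    """Return {group: {domain: share}} where share is the fraction of the
    group's prompts in which the domain was cited at least once."""
    prompts_per_group = defaultdict(set)
    hits = defaultdict(lambda: defaultdict(set))

    for obs in observations:
        prompts_per_group[obs["group"]].add(obs["prompt"])
        for domain in obs["cited_domains"]:
            hits[obs["group"]][domain].add(obs["prompt"])

    return {
        group: {
            domain: len(prompts) / len(prompts_per_group[group])
            for domain, prompts in hits[group].items()
        }
        for group in prompts_per_group
    }


# Hypothetical observations for a single prompt group.
observations = [
    {"group": "crm-evaluation", "prompt": "best crm for agencies",
     "cited_domains": ["ours.example", "rival.example"]},
    {"group": "crm-evaluation", "prompt": "crm pricing comparison",
     "cited_domains": ["rival.example"]},
]
print(citation_share(observations))
```

Keeping the prompt text on every observation means any share figure can be traced back to the exact prompts behind it.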

What the dashboard should not show

Avoid metrics that feel crisp but do not lead to better decisions.

I would avoid invented composite scores unless every component is visible and operationally useful on its own. I would also avoid dashboards that track only snapshots without preserving the prompt or source context behind them.

The job of the dashboard is not to turn uncertainty into false precision. It is to make the uncertainty legible enough that the team can act on it.

- One blended AI visibility score with no underlying breakdown.

- Prompt checks with no saved prompt set or source context.

- Charts that show movement but not which page or competitor caused it.

- Metrics that no one can connect to content, product, or pipeline decisions.
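To show which page or competitor caused a movement, the saved snapshots need to keep the cited sources per prompt, not just a count. A minimal sketch, assuming each snapshot is stored as a mapping from prompt text to the set of cited URLs (a hypothetical storage format, as is the function name):

```python
def source_movement(previous, current):
    """Compare two snapshots ({prompt: set of cited URLs}) and return, for each
    prompt that changed, which sources were gained and which were lost."""
    changes = {}
    for prompt in sorted(set(previous) | set(current)):
        before = set(previous.get(prompt, ()))
        after = set(current.get(prompt, ()))
        gained, lost = after - before, before - after
        if gained or lost:
            changes[prompt] = {"gained": sorted(gained), "lost": sorted(lost)}
    return changes


# Hypothetical snapshots from two consecutive checks.
last_week = {"best crm for agencies": {"https://ours.example/crm",
                                       "https://rival.example/crm"}}
this_week = {"best crm for agencies": {"https://rival.example/crm",
                                       "https://other.example/guide"}}
print(source_movement(last_week, this_week))
```

A chart can still plot the trend line, but each point now links to the specific URLs that entered or left the answer.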

Design the dashboard around the operator, not just the executive view

Leadership needs a clean summary, but the working layer should still be built for action.

An executive summary is fine. In fact, it is useful. But the real dashboard should still be built for the operator who has to decide what changes next. That means preserving context, not just showing topline trends.

A good system can roll up to the CEO. It should not be designed only for the CEO. If it is, the team ends up with a reporting artifact rather than a working tool.
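A roll-up for leadership does not have to discard the operator context. The sketch below, with hypothetical row fields and function name, computes a per-layer pass rate for the summary view while keeping the list of failing prompts attached to each rate.

```python
def roll_up(rows, layers=("discoverable", "used_as_source", "cited_or_mentioned")):
    """Summarize per-prompt rows into per-layer pass rates, keeping the
    failing prompts alongside each rate so the summary stays debuggable."""
    summary = {}
    for layer in layers:
        failing = [row["prompt"] for row in rows if not row[layer]]
        rate = (len(rows) - len(failing)) / len(rows) if rows else 0.0
        summary[layer] = {"rate": rate, "failing_prompts": failing}
    return summary


# Hypothetical per-prompt rows for one page.
rows = [
    {"prompt": "best crm for agencies", "discoverable": True,
     "used_as_source": True, "cited_or_mentioned": False},
    {"prompt": "crm pricing comparison", "discoverable": True,
     "used_as_source": False, "cited_or_mentioned": False},
]
for layer, stats in roll_up(rows).items():
    print(layer, stats["rate"], stats["failing_prompts"])
```

The executive view can display only the rates, while the operator view expands `failing_prompts` to see what to work on next.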

Where AgentSEO fits

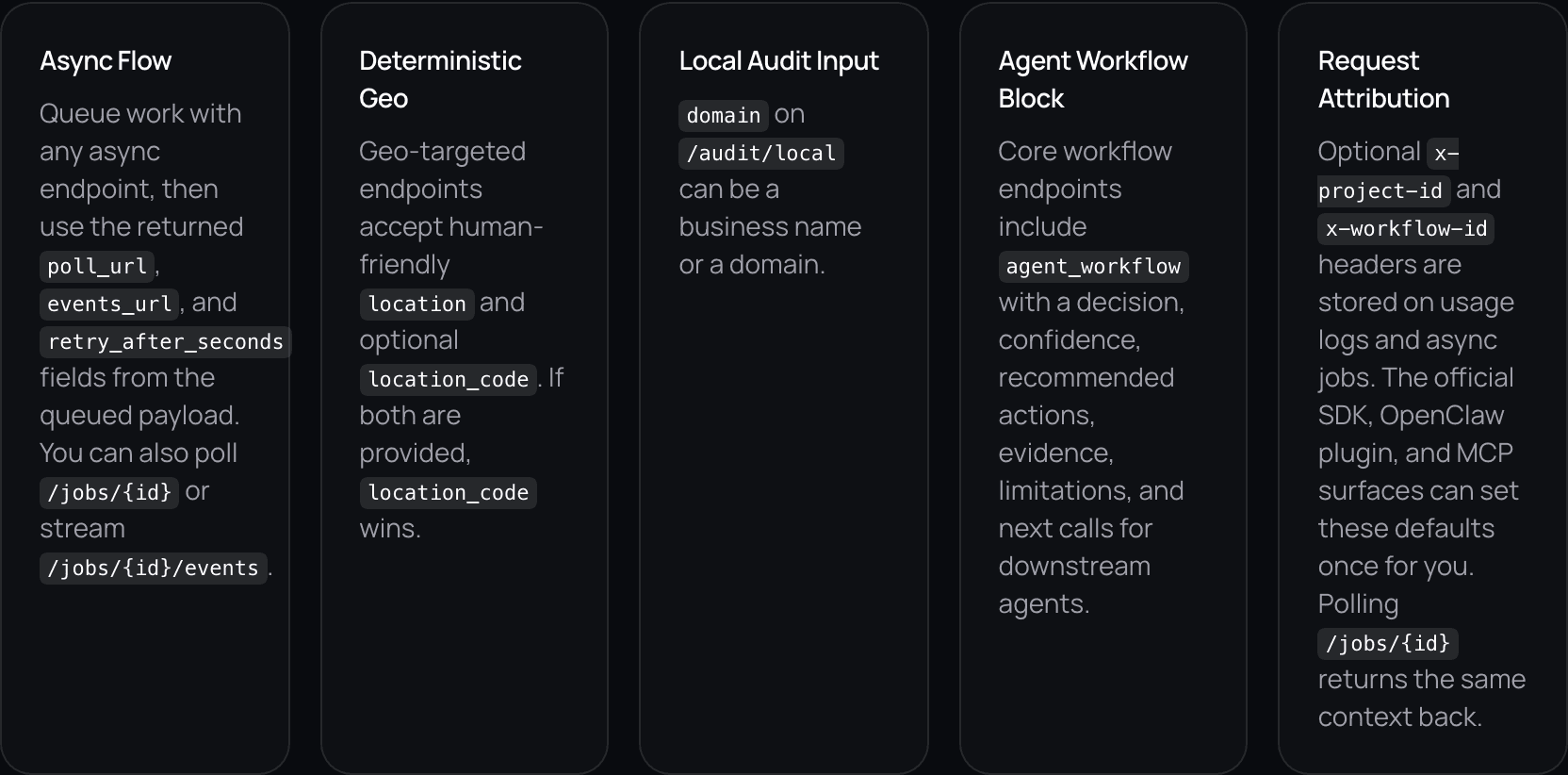

AgentSEO fits the underlying measurement and workflow layer behind a serious AI search dashboard.

The dashboard becomes much more useful when the inputs are compact, structured, and comparable over time. That is where AgentSEO helps. It provides a cleaner search-intelligence layer for prompt tracking, source analysis, and follow-up routing.

That means the dashboard can stay tied to real actions instead of becoming another reporting dead end.


Daniel Martin

Founder, AgentSEO

Inc. 5000 Honoree and founder behind AgentSEO and Joy Technologies. Daniel has helped 600+ B2B companies grow through search and now writes about practical SEO infrastructure for AI agents, MCP workflows, and REST-first execution systems.

FAQ

Questions teams usually ask next

Should I use one AI visibility score in my dashboard?

Usually no. A single score hides too much. It is better to separate discoverability, citations, mentions, and downstream outcomes so the team can see where the real gap lives.

What is the biggest dashboard mistake right now?

Treating screenshots or one-off answer checks as if they were a reporting system. Without a saved prompt set, source context, and page-level action path, the dashboard becomes vanity.

Can executives still get a simple summary?

Yes. Roll up the working metrics into a clean summary view, but keep the operator layer underneath so the team can still debug and act on what changed.
